After exploring z-vector last week, I have spent the last few days exploring how to integrate the Xbox Kinect with Quartz Composer. So far this technique has had limited success: I have been unable to get Quartz Composer to track my movements precisely. As you can see from the video below, I have managed to get a simple sprite to follow my hand. It moves in a similar manner, but it does not follow my hand as precisely as I had hoped. This may be more down to me and some of the maths involved than to the software, although even after following tutorials on the subject I have been unable to replicate the same precise movement.

Using a tool called Synapse, I wanted to produce a simple particle trail that followed my right hand. Synapse lets you use the Xbox Kinect to track the body movements of one or more people and translate them into data, which in turn can be used to control both visuals and sound.

Synapse uses the Kinect’s depth data to recognise human shapes, and then maps this information onto a skeleton (shown in red) made up of various joints (black crosses). A person can be recognised both standing and sitting. The software translates the joint positions into the OSC data protocol, which in turn can control various features of software that supports OSC, e.g. Ableton, Quartz Composer and Resolume. This data can then be used for 3D mapping, tracking and control of any software parameter that accepts OSC input. In effect, the human body becomes the controller, which is a key advantage of this technology and technique.
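To make the OSC step more concrete, here is a minimal sketch of what a joint position message looks like on the wire, using only the Python standard library. The address `/righthand_pos_screen` and the three-float payload are assumptions about what Synapse sends for the right hand, and the sample values are made up; the byte layout (null-padded strings, a type tag string, big-endian floats) follows the OSC 1.0 format.

```python
import struct


def _pad(raw: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC requires."""
    raw += b"\x00"
    return raw + b"\x00" * (-len(raw) % 4)


def encode_osc_message(address: str, floats: list) -> bytes:
    """Build an OSC message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(floats)
    payload = struct.pack(f">{len(floats)}f", *floats)  # big-endian floats
    return _pad(address.encode("ascii")) + _pad(type_tags.encode("ascii")) + payload


def _read_padded_string(data: bytes, offset: int):
    """Read a null-terminated string and return it with the next aligned offset."""
    end = data.index(b"\x00", offset)
    text = data[offset:end].decode("ascii")
    return text, (end + 4) & ~3  # skip the terminator and padding


def decode_osc_message(data: bytes):
    """Decode an OSC message containing float ('f') and int ('i') arguments."""
    address, offset = _read_padded_string(data, 0)
    type_tags, offset = _read_padded_string(data, offset)
    if not type_tags.startswith(","):
        raise ValueError("missing OSC type tag string")
    args = []
    for tag in type_tags[1:]:
        if tag == "f":
            (value,) = struct.unpack_from(">f", data, offset)
        elif tag == "i":
            (value,) = struct.unpack_from(">i", data, offset)
        else:
            raise ValueError(f"unsupported type tag: {tag}")
        args.append(value)
        offset += 4
    return address, args


# Hypothetical right-hand position: x and y in pixels, z as a depth value.
packet = encode_osc_message("/righthand_pos_screen", [320.0, 240.0, 1500.0])
address, (x, y, z) = decode_osc_message(packet)
print(address, x, y, z)
```

In Quartz Composer the decoding is handled by the OSC receiver patch, so none of this needs writing by hand; the sketch is only meant to show that each joint arrives as a named address plus a short list of numbers.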

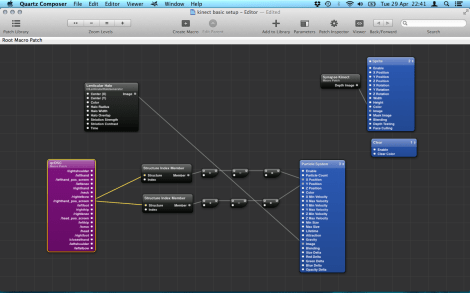

Each joint and limb sends data to the software, which can be used to control various parameters. In the above screenshot, the myOSC patch has hand tracking outputs which are translated into X/Y movement of the particle. The translation requires a Structure Index Member patch to pull the individual values out of the incoming structure, followed by some maths: 640 – 1.5 x 2 for X and 480 – 1.5 x 2 for Y. The results are then connected to the particle’s X and Y movement axes. In theory this should map the particle to my right hand movements. In reality I haven’t managed to get it to function correctly. I have varied the maths, with mixed results, and have so far been unsuccessful. I aim to explore this further and hope to get it working correctly soon.
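One possible way to think about the maths is as a conversion from pixel coordinates to Quartz Composer’s own coordinate space, where X runs from -1 to 1 across the viewer and Y is scaled by the aspect ratio. The sketch below is not the expression from the tutorial, just an illustration under some assumptions: that the hand position arrives in pixels within a 640 by 480 frame (suggested by the 640 and 480 in the expressions above), and that the pixel origin is the top-left corner with Y increasing downwards. The function name `pixel_to_qc` is hypothetical.

```python
def pixel_to_qc(x_px: float, y_px: float,
                width: float = 640.0, height: float = 480.0):
    """Map a pixel position to Quartz Composer style units.

    Assumes the pixel origin is top-left with Y pointing down, and that the
    target space is centred on (0, 0) with X spanning -1..1 and Y spanning
    -aspect..aspect, where aspect = height / width.
    """
    aspect = height / width
    x = (x_px / width) * 2.0 - 1.0            # 0..width  ->  -1..1
    y = (1.0 - 2.0 * y_px / height) * aspect  # flip Y so that up is positive
    return x, y


print(pixel_to_qc(320, 240))   # centre of the frame -> (0.0, 0.0)
print(pixel_to_qc(0, 0))       # top-left corner     -> (-1.0, 0.75)
print(pixel_to_qc(640, 480))   # bottom-right corner -> (1.0, -0.75)
```

If the sprite moves in the right direction but drifts away from the hand, checking the three reference points above (centre and two opposite corners) against what appears in the viewer is a quick way to see whether the scale, the offset or the Y direction is the part that is off.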

The key advantage of this method is the level of control it offers over a variety of parameters for both graphics and sound: simple movements by the audience can be translated into control over many parameters at once. This will enable the audience to interact with the work in a simple manner, which is a key aim of my final piece. The Kinect is quite an advanced 3D depth-sensing camera at a very reasonable price. Its main advantage over other methods of interaction is that human forms and skeletal shapes can be recognised and picked out from the background. This offers a very granular level of control through nothing more than simple body movements.

The video below shows the process I went through in detail.

- Synapse (2014). Synapse for Kinect. Available at: <http://synapsekinect.tumblr.com> [Accessed 16 April 2014].

- St. Jean, J. (2013). Kinect Hacks: Tips & Tools for Motion and Pattern Detection. Cambridge, O’Reilly Media.