As the project enters the final stages of production, it seems like a good time to give a quick overview and description of the technical process involved in creating a 3D spherical projection. After the initial tests projecting onto a cube with flat surfaces, projecting onto curved surfaces posed further problems. How do you map the image onto a spherical shape? How do you project onto the entire surface of the sphere?

It seemed like a good idea to test the project on a small sphere first, to make sure it worked before scaling up. The ideal surface to project onto would be white. After some research, I found a variety of websites specialising in large white polystyrene spheres in a range of sizes – up to 10m!

This was the perfect object to project onto, and after some initial trials using just one projector the results were impressive. Once the technical side of the project had been tested and found to work, it was time to focus on creating the content. You can read the initial ideas and storyboards here. Some of the ideas were potentially difficult to create using CGI, so space footage and microscopic footage were appropriated from public domain and copyright free sources. NASA/ESA footage is completely usable, but I have to ensure that the material is credited at the end of the project. The microscopic footage was taken from a film called Microscopic Life: The World of the Invisible (1958), which is in the public domain and copyright free, amongst a couple of other sources.

Motion 5

Other footage was created through still images and film that I captured of abstract objects and wildlife/plants over the last couple of months. After collating the footage the structure of the piece started to form based around the initial storyboards. Under the advice of my tutor, Prof. Suzie Hanna, I really wanted to keep the visuals as organic as possible, hence the predominant usage of real footage as opposed to CGI. I loaded a variety of still images into Apple’s Motion 5 vfx software and created a variety of animations. In the image below you can see the creation of a very simple animation of a rotating flower.

Resolume Arena

After gathering all of the clips together, both found and created, I loaded them into a piece of media server/VJ software called Resolume Arena. This software works very much like the audio software Ableton Live, and allows you to trigger either individual or multiple loops of video in sync with audio. It works with layers of video, to which you can apply a variety of blend modes to mix them together. It is a very powerful piece of software, and even though all the video I gathered and created was 720p, my 2009 13″ MacBook Pro handled it fine. You can also apply a variety of effects, transitions and basic projection mapping. Because the clips are triggered live, each performance of the piece is slightly different.
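To give a rough idea of what mixing layers with blend modes means, here is a minimal Python sketch of three common blend formulas (add, multiply and screen) applied to a single pixel. These are the standard textbook definitions, not necessarily how Arena implements them internally, and the function names and the `opacity` parameter are my own for illustration.

```python
# Colour channels are floats in the range 0.0 (black) to 1.0 (full brightness).

def blend_add(base, top):
    """Add: brightens by summing the layers, clipped at full brightness."""
    return min(1.0, base + top)

def blend_multiply(base, top):
    """Multiply: darkens, since multiplying two values <= 1 never gets brighter."""
    return base * top

def blend_screen(base, top):
    """Screen: the inverse of multiply, so the result never gets darker."""
    return 1.0 - (1.0 - base) * (1.0 - top)

def blend_pixel(base_rgb, top_rgb, mode, opacity=1.0):
    """Blend one RGB pixel of the top layer over the base layer.

    `mode` is one of the blend functions above; `opacity` fades the
    blended result back towards the untouched base layer.
    """
    blended = tuple(mode(b, t) for b, t in zip(base_rgb, top_rgb))
    return tuple(b + (m - b) * opacity for b, m in zip(base_rgb, blended))

if __name__ == "__main__":
    stars = (0.5, 0.5, 0.5)    # a mid-grey pixel from one clip
    flower = (0.5, 0.25, 0.0)  # an orange-ish pixel from another
    print(blend_pixel(stars, flower, blend_screen))
    print(blend_pixel(stars, flower, blend_multiply, opacity=0.5))
```

On a white sphere in a dark room, black projects as "nothing", so modes like add and screen are a natural fit for layering bright footage over a dark background.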

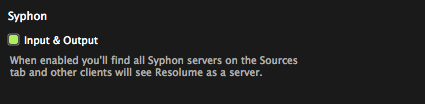

Syphon and MadMapper

Syphon is the video equivalent of Propellerhead's ReWire. It enables you to send a video stream from one application to another. Arena has a very simple setting in the preferences which, once enabled, makes its video output available to other Syphon-enabled applications.

MadMapper offers far more advanced projection mapping than the basic features built into Arena, and copes with much more complicated objects and shapes. For Sphere in particular, it was very important to be able to map onto a sphere using multiple projectors, and to distort the image to create the illusion that the graphics are coming from within the object.
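To illustrate the kind of distortion involved, here is a small Python sketch of the geometry behind wrapping a flat image around a sphere. It is not how MadMapper works internally (there the mapping is set up by hand in the interface); it is a simplified model that assumes the source image is a latitude/longitude ("equirectangular") texture and that the projector is far enough away for its rays to be treated as parallel. The function name and the `projector_yaw` parameter are hypothetical.

```python
import math

def projector_pixel_to_uv(x, y, projector_yaw=0.0):
    """Find which part of a lat/long texture a projector pixel should show.

    x, y: position in the projector image, normalised so the sphere's
          outline is the unit circle (x to the right, y upwards).
    projector_yaw: angle in radians of this projector around the sphere,
          so several projectors can each cover a different side.

    Returns (u, v) texture coordinates in the range 0..1, or None if the
    pixel falls outside the sphere's outline.
    """
    r2 = x * x + y * y
    if r2 > 1.0:
        return None  # this pixel misses the sphere; leave it black

    # Depth of the point on the sphere's surface facing the projector.
    z = math.sqrt(1.0 - r2)

    # Convert the surface point to longitude and latitude.
    lon = math.atan2(x, z) + projector_yaw
    lat = math.asin(y)

    # Convert longitude/latitude to texture coordinates.
    u = (lon / (2.0 * math.pi) + 0.5) % 1.0
    v = 0.5 - lat / math.pi
    return u, v

if __name__ == "__main__":
    print(projector_pixel_to_uv(0.0, 0.0))  # centre of the sphere
    print(projector_pixel_to_uv(0.9, 0.0))  # near the right-hand edge
    print(projector_pixel_to_uv(1.5, 0.0))  # outside the sphere
```

Pixels near the edge of the outline cover much more of the sphere's surface than pixels in the centre, which is why a single projector gives a stretched image at the edges and why several projectors are needed to cover the whole surface.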

Once you have a video input enabled in MadMapper, it is then a case of setting up the mapping in the software for the shapes onto which you wish to project the images. It also allows you to distort the images in 3D. This step requires a lot of patience and trial and error to get right. The first test was shot with a single projector and a single static camera. The problem with this is that the object is intended as a 3D video installation, and a single static view did not give the viewer a sense of the object's depth. After a group critique with Mark Aerial Waller, one of the tutors at NUA, I decided to get hold of some extra projectors and record it to a substantially better standard. This setup was even harder to get right, but once it was working, the results were even better.

Filming Sphere

As mentioned before, each performance of Sphere is slightly different. The project will be submitted as a video (although it will be exhibited as an installation piece, as intended, in Norwich next year), so to give a sense of depth I thought it best to record a multi-camera shoot of the project and edit afterwards. Due to limitations on equipment, I currently only have access to one Canon 600D to film the project. Arena has an inbuilt recorder, so I recorded a performance of the piece and then played it back 6 times, filming a different angle each time to edit together later. I am currently in the process of editing the final piece using the superb multicamera editing tools in Final Cut Pro X. I will discuss this in a little more depth in my next post.

Obviously there has been a lot more work behind the scenes than maybe this technical description conveys, but this should give a basic overview of the creation and recording of the final piece.